You can read our other entries on the subject: UI, Describing Images, and User Testing

Astute readers of our last two entries will notice that we haven't mentioned the Definitions Area, and that's for good reason. The programs in the Interactions Area are so short that there's no harm in having the computer read out each symbol or keyword. But that ignores a much, much more difficult problem: how does a screen reader navigate programs that are dozens, hundreds or even thousands of lines long?

In our first post on the subject, we said the first step in making a User Interface accessible is annotating it so that a screen-reader can discern the structure of the UI. Structure is important for usability, which is why our computers use all sorts of visual metaphors to structure a program into menus, palettes, ribbons, etc. Even huge blocks of text have structure: books have chapters, chapters have pages, and pages have paragraphs. DVDs are divided into chapters to make it easy to jump around in a movie, and anyone who's tried to jump to a scene in an on-demand movie knows how difficult navigation can be when all you have is "fast forward"!

So how do we build structure for code? One option is to do everything with line numbers, so a blind user can navigate to line 731 and have the computer start reading. Of course, this requires the programmer to keep a lot of line numbers in their head, essentially mapping numbers to semantic elements in code. It's doable, but it's an added burden. And every time new code is inserted, the line numbers of all the code after that line will change as well.

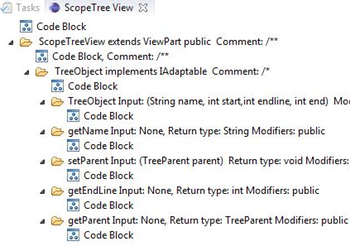

A better option is to parse the program, converting the raw text into an Abstract Syntax Tree (AST) that describes its structure. Just as a book can be divided into chapters, a program can be divided into class definitions, function definitions, etc. It would be great for a blind user to get a tree view of their well-formed program, and then dig deeper into any one of those definitions to hear each line of code. This approach has already shown promise, thanks to the incredible work of Catherine Baker, Lauren Milne, and Richard Ladner. Their StructJumper program used a similar solution to allow blind programmers to navigate well-formed Java programs, and they found that several common tasks were made easier with a tree view (you can check out a demo here!). With a little elbow grease, we can hook our compiler front-end up to our UI and do in the browser what StructJumper does, using ARIA annotations!
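
To make the "chapters of a program" idea concrete, here is a minimal sketch of turning a parsed program into an outline. It is not our actual front-end: it assumes Python's built-in `ast` module as a stand-in parser, and the `outline` function and its `(depth, label, line)` tuples are hypothetical names chosen for illustration. The depth is the kind of number a tree view could expose to a screen reader as a nesting level.

```python
import ast


def outline(source: str) -> list[tuple[int, str, int]]:
    """Return (depth, label, line) for every class and function definition.

    Assumption: the source parses cleanly. ast.parse raises SyntaxError
    otherwise, and in that case no outline can be built at all.
    """
    entries = []

    def visit(node, depth):
        for child in ast.iter_child_nodes(node):
            if isinstance(child, ast.ClassDef):
                entries.append((depth, f"class {child.name}", child.lineno))
                visit(child, depth + 1)  # definitions inside nest one level deeper
            elif isinstance(child, (ast.FunctionDef, ast.AsyncFunctionDef)):
                entries.append((depth, f"function {child.name}", child.lineno))
                visit(child, depth + 1)
            else:
                visit(child, depth)  # keep looking inside ifs, loops, etc.

    visit(ast.parse(source), 1)
    return entries


program = "class A:\n    def f(self):\n        pass\n\ndef g():\n    pass\n"
for depth, label, line in outline(program):
    print("  " * (depth - 1) + f"{label} (line {line})")
```

A user could move between top-level entries first, then step into one to reach its nested definitions, without ever memorizing a line number.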

This approach (and StructJumper's) falls apart, however, if a program has even a tiny syntax error. If a thousand-line program doesn't parse, we can't build a parse tree. And without that parse tree, we're stuck with a thousand lines of unstructured code! To improve accessibility for visually impaired users, we need to do everything possible to construct a parse tree at all times.

This is one of those times where seemingly disconnected pieces bump up against one another quite closely: some programming languages are more easily parseable than others! The Racket language we use in Bootstrap:Algebra is particularly easy to parse, provided that every opening parenthesis has a matching closing parenthesis. Any expression of the form (f e1 ... en) could be the function f applied to arguments e1 through en. Some expressions parse to other forms, such as (if e1 e2 e3), and will generate a parse error if they don't have the right number of elements. When presented with such an error, however, the parser can treat the expression as "unknown" and continue parsing the elements within. As long as the brackets are matched, we can always generate something. And that something can be annotated, searched and navigated.
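
The "unknown" trick can be sketched in a few lines. This is an illustration and not our real parser: the `Node` class, the `tokenize`, `parse` and `classify` functions, and the node kinds are all hypothetical names, and the only special form handled is `if`. It assumes, as above, that the parentheses are matched.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Node:
    kind: str  # "atom", "if", "application", or "unknown"
    text: str = ""  # only set for atoms
    children: list["Node"] = field(default_factory=list)


def tokenize(source: str) -> list[str]:
    # Parentheses are their own tokens; everything else splits on whitespace.
    return re.findall(r"[()]|[^\s()]+", source)


def classify(children: list[Node]) -> Node:
    # Malformed forms become "unknown" but keep their already-parsed children.
    if not children:
        return Node("unknown", children=children)
    head = children[0]
    if head.kind == "atom" and head.text == "if":
        kind = "if" if len(children) == 4 else "unknown"
        return Node(kind, children=children)
    return Node("application", children=children)


def parse(tokens: list[str], pos: int = 0) -> tuple[Node, int]:
    """Parse one expression at tokens[pos]; assumes matched parentheses."""
    token = tokens[pos]
    if token != "(":
        return Node("atom", text=token), pos + 1
    pos += 1
    children = []
    while tokens[pos] != ")":
        child, pos = parse(tokens, pos)
        children.append(child)
    return classify(children), pos + 1


# The if is missing its else branch, yet we still get a full tree.
tree, _ = parse(tokenize("(define (abs x) (if (> x 0) x))"))
broken_if = tree.children[2]
print(broken_if.kind, [c.kind for c in broken_if.children])
```

The malformed `if` is flagged, but the test expression inside it is still parsed as an ordinary application, so there is always a tree to annotate and navigate.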

So how do we ensure that parentheses are always matched? One option is to use some kind of autocompleting software that inserts a closing paren whenever an open paren is typed, but this is a fragile solution. We're betting that the real solution for the visually-impaired is actually hiding in the last place anyone would look: block-based programming. And no, not the blocks you're thinking of (not even close!). We'll get to that subject in our next series of blog posts...stay tuned!

This initiative is made possible by funding from the National Science Foundation and the ESA Foundation. We'd also like to extend a huge thank-you to Sina Bahram and Paul Carduner for their incredible contributions, support and patience during this project.

Posted April 30th, 2017